Published 28 April 2026

In this blog, Janniek Starren explores why treating AI agents as applications rather than identities creates governance blind spots that existing frameworks already know how to solve, what Microsoft’s new Agent 365 and Entra Agent ID actually change, and why the real risk is not the rogue agent but the forgotten one.

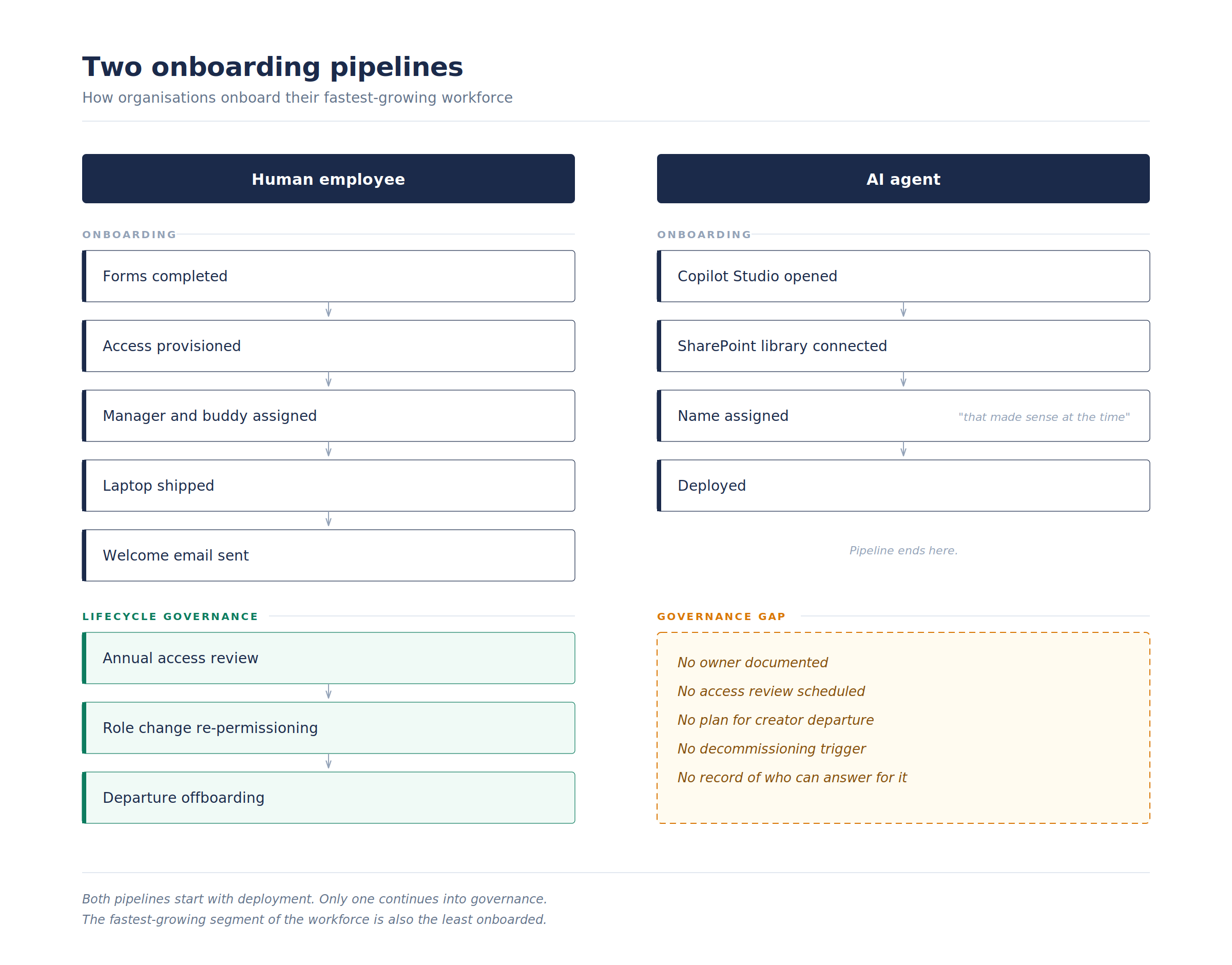

Every organisation has an employee onboarding process. Forms to complete. Access to provision. A manager and buddy assigned. A laptop shipped, eventually. Depending on the organisation, perhaps even a welcome email that someone reads.

Now consider how the last AI agent in your environment was created. Someone opened Copilot Studio, pointed it at a SharePoint library, gave it a name that made sense at the time, and moved on. No owner documented. No access review scheduled. No plan for what happens when that someone leaves.

We would never onboard a human this way. The compliance team would have words. Firmly worded words, probably in a document that itself has a retention policy.

And yet, this is how most organisations onboard the fastest-growing segment of their workforce.

The colleague without a contract

Machine identities outnumber human identities in enterprise environments by roughly 82 to one. Service accounts, API keys, automated workflows, all accumulating quietly for years like digital sediment. AI agents are the latest addition, and the first that can meaningfully be described as autonomous.

Previous non-human identities were passive. A service account authenticates. An API key grants access. They do one thing, repeatedly, without opinions about it. AI agents make decisions, adapt behaviour based on context, and interact with data in ways that shift depending on the prompt, the model version, and whatever optimisation function they happen to be pursuing that particular Tuesday.

An API key is a tool. An AI agent is a colleague with no employment contract and an unsettling amount of initiative.

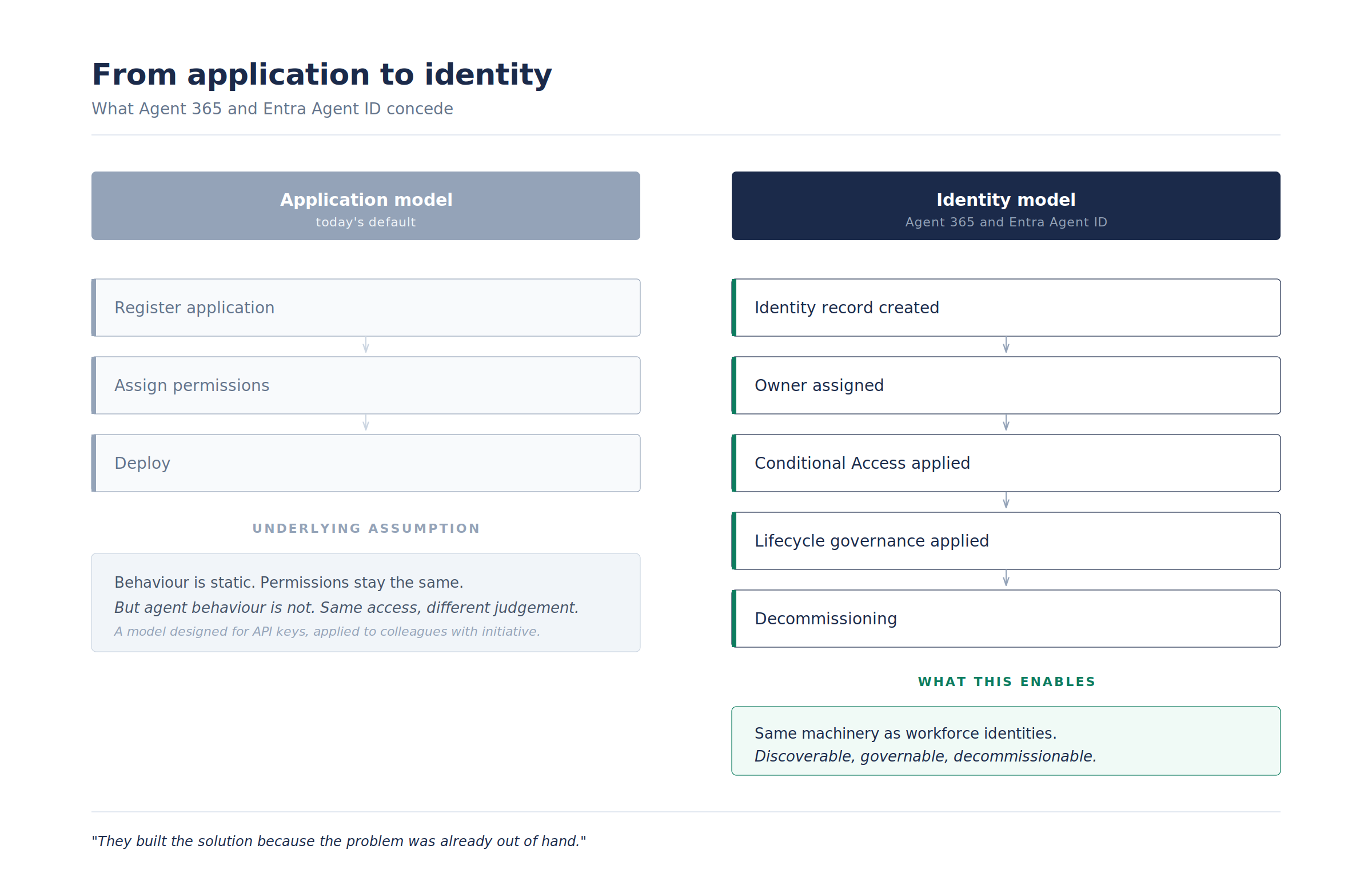

Most organisations govern these entities through the application registration process. Create the app, assign permissions, move on. But application governance assumes static behaviour. With agents, the permissions stay the same but the behaviour changes. Same access, different judgement.

Most organisations cannot answer basic questions about their agent population. How many exist? Who owns each one? What happens when their creator leaves? These are not exotic governance questions. They are the same questions every HR department answers for humans. The difference is that nobody has assigned responsibility for asking them about machines.

What Microsoft just quietly admitted

Microsoft’s introduction of Agent 365 and Entra Agent ID is interesting less for what it builds than for what it concedes. By creating identity infrastructure specifically for AI agents, Microsoft is acknowledging what practitioners have been saying for months: the application model is not fit for purpose when applications start thinking for themselves.

Agents now become first-class identities within the directory. Each gets a tracked, immutable identity record. Conditional Access policies govern agent behaviour alongside human sign-ins. The Agent Registry provides a centralised catalogue that surfaces both sanctioned agents and, rather more usefully, the shadow agents that nobody knew were there.

The architecture extends the same lifecycle governance patterns used for workforce identities to non-human actors: blueprints, identity records, access packages. Organisations can discover, govern, and decommission agents using tools that already exist in Entra.

The best thing about it is also the most telling: they built the solution because the problem was already out of hand.

For M365 environments, the immediate value is visibility. Open the Entra admin centre and filter for Agent ID application types. The resulting list tends to be educational. Occasionally sobering.

The lifecycle gap

Security teams frame agent governance as a security problem, which is understandable given that ungoverned agents create genuine risk. But security treats the symptom. Lock down agent permissions, and developers create new agents through personal accounts. Tighten further, and they find workarounds. Banning AI agents works about as well as banning USB drives did. Which is to say, it works until someone needs to get something done.

The deeper gap is lifecycle.

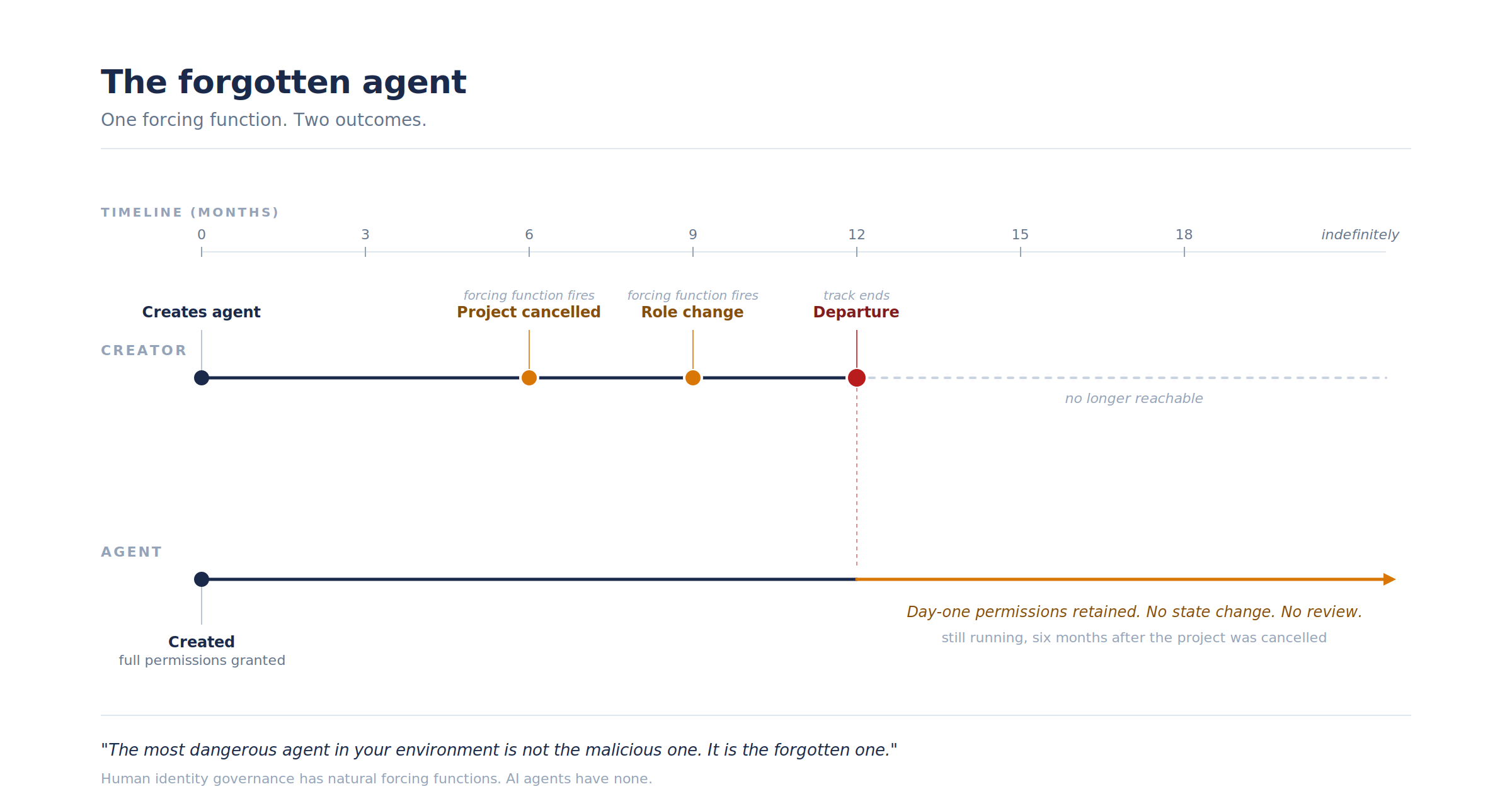

Human identity governance has natural forcing functions. People leave. Roles change. Annual access reviews happen, grudgingly, because compliance requires them. Imperfect, occasionally theatrical, but they create friction that prevents indefinite access accumulation.

AI agents have no natural lifecycle forcing function. An agent created for a specific project continues running after the project ends. An agent built by someone who left six months ago continues accessing data with day-one permissions. Unlike a human who stops showing up, an agent keeps working indefinitely. Silently. Compliantly. With the quiet diligence of someone who does not know the project was cancelled in October.

The most dangerous agent in your environment is not the malicious one. It is the forgotten one.

Four things worth doing now

Here is where to start:

- Inventory what exists. Use Entra Agent ID to discover the agent identities in your directory. The moment of realisation tends to be motivating.

- Assign ownership to every agent. Every human identity has a manager. Every agent needs a sponsor. Someone accountable for its continued existence, its access, its behaviour. Without ownership, governance is aspiration dressed as policy.

- Apply lifecycle governance to non-human identities. Include agents in existing access review processes. Ninety days of inactivity warrants investigation. Creator departure warrants immediate action. The machinery for this already exists in most M365 environments. The recognition that it applies to agents does not.

- Make the governed path the easy path. The single most effective way to reduce shadow AI is to provide sanctioned alternatives that actually meet the need. If the governed environment is slower and more restrictive than a personal ChatGPT account, governance will fail regardless of how many policies you publish. Build the on-ramp before building the fence.
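The ownership and lifecycle rules above are simple enough to express as a check. What follows is a minimal sketch, not an Entra feature: the `AgentRecord` fields, the `review_findings` and `build_review_queue` helpers, and the sample agents are all hypothetical, and it assumes the inventory has already been exported with a creator, a sponsor, and a last-activity date for each agent.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Threshold taken from the text: ninety days of inactivity warrants investigation.
INACTIVITY_LIMIT = timedelta(days=90)


@dataclass
class AgentRecord:
    """Hypothetical inventory row; field names are illustrative only."""
    name: str
    creator: str
    sponsor: Optional[str]
    last_active: date


def review_findings(agent: AgentRecord, active_staff: set, today: date) -> list:
    """Return the reasons this agent needs attention (empty list if none)."""
    findings = []
    if agent.sponsor is None:
        findings.append("no sponsor assigned")
    elif agent.sponsor not in active_staff:
        findings.append("sponsor has left")
    if agent.creator not in active_staff:
        findings.append("creator has left: act immediately")
    if today - agent.last_active >= INACTIVITY_LIMIT:
        findings.append("inactive for 90+ days: investigate")
    return findings


def build_review_queue(agents: list, active_staff: set, today: date) -> dict:
    """Map agent name to findings, keeping only agents with at least one finding."""
    queue = {}
    for agent in agents:
        findings = review_findings(agent, active_staff, today)
        if findings:
            queue[agent.name] = findings
    return queue


# Made-up sample data for illustration.
today = date(2026, 4, 28)
active_staff = {"alex", "sam"}
agents = [
    AgentRecord("hr-faq-bot", creator="alex", sponsor="sam",
                last_active=date(2026, 4, 20)),
    AgentRecord("project-x-summariser", creator="jordan", sponsor=None,
                last_active=date(2025, 10, 15)),
]
queue = build_review_queue(agents, active_staff, today)
```

In this made-up data, `hr-faq-bot` passes and `project-x-summariser` is queued on three counts: no sponsor, a departed creator, and months of silence. The point of the sketch is that the forgotten agent is found by asking the same questions an access review already asks about people.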

Governance before chaos

Connected Intelligence has always positioned human judgement as the compass. Agent identities test that principle directly. The question is no longer just how humans and systems work together. It is how humans govern systems that work independently, continuously, without waiting to be told what matters.

The agents will keep coming. The governance question is not whether to permit them; that decision was made the moment someone needed something done faster than the ticket queue allowed. The question is whether governance keeps pace, or whether the organisation discovers it has more autonomous systems than people who remember creating them.

Governance is not a constraint on innovation. It is what makes innovation survivable.

How many agents are running in your environment right now? Who owns them? And what is the offboarding process when they are no longer needed?

If the answer to any of those is “not sure,” the governance gap is already open.

About the author:

Janniek Starren leads Engage Squared’s AI Business Solutions, focused on how organisations move from collaboration tools to genuine organisational intelligence, and what changes when AI stops being a feature and starts being infrastructure. His writing here covers the architecture, governance, and judgement calls that decide whether any of it holds together. For more of Janniek’s thinking, sign up to his LinkedIn newsletter.